Peering into Privacy: A Deep Dive into the Monero Network Topology

Introduction

At ProbeLab, our mission is to provide high-fidelity data on decentralized infrastructures. Having extensively mapped ecosystems like Ethereum, IPFS, Filecoin, and more, we are excited to announce that we have successfully extended Nebula, our flagship network crawler, to support the Monero network. This expansion allows us to go beyond simple node counts and delve into the structural health, geographic distribution, and security posture of the Monero ecosystem.

While platforms like monero.fail and moneronet.info provide excellent real-time data on public nodes and network health, our objective with Nebula is to add a complementary methodology for the P2P layer, one that lets us identify nuances in node behavior and infrastructure concentration that were previously unanalysed or invisible.

Monero is, by design, one of the most privacy-preserving financial networks. At the transaction layer, the cryptography is strong and the privacy guarantees are real, with techniques like ring signatures and stealth addresses that obscure senders and hide recipients respectively. But a network is more than its transaction protocol, and at the P2P layer Monero looks remarkably similar to other decentralized systems that we at ProbeLab have investigated before. Nodes expose IP addresses, handshakes expose metadata, peer lists get shared openly, and behavioral patterns can tell you quite a lot if you know how to read them.

The gap between Monero’s reputation for opacity and the relative transparency of its networking layer is exactly why we decided to look deeper.

In this post, we’ll share a snapshot of the current network state, explain the methodology behind our crawl, and address the unique challenges and controversies that come with monitoring one of the world’s leading privacy crypto-networks/currencies. We're publishing this as a neutral snapshot, not an advocacy piece. What follows is a data-driven account of what we found.

Methodology

The Levin Protocol

Monero's P2P layer uses a custom binary protocol called Levin. To crawl the network, we had to implement a subset of this protocol capable of performing handshakes and requesting peer lists. While the Monero daemon is written in C++, Nebula is Go-based. We leveraged the foundational work of cirocosta/go-monero, adapting and extending its logic to handle the requirements of our network crawler.

The Levin protocol frames messages with a header that encodes an 8-byte signature, payload length, flags, command ID, and return code. The payload itself is serialized using the Portable Storage binary format.
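To make the framing concrete, here is a minimal Python sketch of packing and parsing such a header. It is not Nebula's code (Nebula is written in Go). The exact field order, the 33-byte packed little-endian layout, the signature constant, the request flag value, and the extra expect-response and protocol-version fields are assumptions based on our reading of the Monero reference implementation and should be verified against it.

```python
import struct

# Assumed packed little-endian layout (33 bytes): signature u64, payload
# length u64, expect-response u8, command u32, return code i32, flags u32,
# protocol version u32.
LEVIN_HEADER = struct.Struct("<QQBIiII")
LEVIN_SIGNATURE = 0x0101010101012101  # assumed signature constant
FLAG_REQUEST = 0x1                    # assumed flag value for requests
PROTOCOL_VERSION = 1                  # assumed
COMMAND_HANDSHAKE = 1001
COMMAND_PING = 1003


def build_request_header(command: int, payload_len: int) -> bytes:
    """Serialize a request header announcing `payload_len` payload bytes."""
    return LEVIN_HEADER.pack(
        LEVIN_SIGNATURE, payload_len, 1, command, 0, FLAG_REQUEST, PROTOCOL_VERSION
    )


def parse_header(raw: bytes) -> dict:
    """Decode the fixed-size header at the start of `raw`."""
    if len(raw) < LEVIN_HEADER.size:
        raise ValueError("short read: need a full Levin header")
    signature, length, expect_response, command, return_code, flags, version = (
        LEVIN_HEADER.unpack_from(raw)
    )
    if signature != LEVIN_SIGNATURE:
        raise ValueError("bad signature: not a Levin frame")
    return {
        "payload_len": length,
        "expect_response": bool(expect_response),
        "command": command,
        "return_code": return_code,
        "flags": flags,
        "version": version,
    }


header = build_request_header(COMMAND_PING, payload_len=0)
print(parse_header(header))
```

A reader would first read the fixed-size header, then read exactly `payload_len` further bytes and hand them to a Portable Storage decoder, which is out of scope for this sketch.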

The command IDs we care about for crawling are:

- HANDSHAKE (1001): The first thing two nodes exchange. Each side sends its node data (e.g., node ID, port information, flags) and a list of peers it knows about, with up to 250 entries by default. This is the primary source of peer discovery during a crawl.

- PING (1003): A lightweight liveness check. Importantly, the response contains the remote node's peer ID.

The handshake peer exchange is how we build our graph. Each handshake gives us up to 250 candidate addresses to connect to next, out of a larger pool of peers that a remote peer maintains.

How Nebula Crawls the Network

Nebula maintains a queue of (IP, port) pairs to dial. Starting from the canonical seed nodes, Nebula performs a recursive walk executing the following steps:

- Open a TCP connection.

- Execute the Levin handshake to discover new peers.

- Execute a Levin ping to verify identity.

- Perform a get_info RPC call if applicable.

Tracking both the handshake peer ID and the ping peer ID independently for every node turns out to be important as we’ll see shortly.
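The recursive walk can be sketched as a breadth-first traversal over (IP, port) pairs. This is an illustration only, not Nebula's implementation: `visit` is a hypothetical callable standing in for the dial, handshake, ping and RPC steps, and the toy network below replaces real sockets.

```python
from collections import deque


def crawl(seeds, visit):
    """Breadth-first walk over (ip, port) pairs.

    `visit` is a hypothetical callable that dials one address and returns a
    dict with the peers learned in the handshake, or None if the dial failed.
    """
    queue = deque(seeds)
    seen = set(seeds)
    results = {}
    while queue:
        addr = queue.popleft()
        result = visit(addr)
        results[addr] = result
        if result is None:
            continue  # unreachable: nothing new to learn from this address
        for peer in result["peers"]:
            if peer not in seen:  # dial every address at most once
                seen.add(peer)
                queue.append(peer)
    return results


# Toy network: 10.0.0.3 is advertised by the seed but never answers.
TOY_NETWORK = {
    ("10.0.0.1", 18080): [("10.0.0.2", 18080), ("10.0.0.3", 18080)],
    ("10.0.0.2", 18080): [("10.0.0.1", 18080)],
}


def toy_visit(addr):
    peers = TOY_NETWORK.get(addr)
    return None if peers is None else {"peers": peers}


results = crawl([("10.0.0.1", 18080)], toy_visit)
reachable = [addr for addr, res in results.items() if res is not None]
print(len(results), "discovered,", len(reachable), "reachable")
```

A production crawler would run many visits concurrently and apply timeouts and retries; the sequential loop here only shows the queue and de-duplication logic.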

Crawls are run from the most reliable and stable AWS region us-east-1.

A full crawl of the reachable network completes in around 9 minutes depending on connection timeouts. For this snapshot, we analyzed data from a representative crawl on 2026-02-24, enriched with geographic and infrastructure metadata from our GeoIP provider ipregistry.co.

We've put a complete data point of crawling a single node in the Appendix below to potentially inspire different analyses than presented here.

Limitation

It is important to note that Nebula only maps the reachable network. Nodes behind restrictive firewalls or NATs that do not allow inbound connections are "invisible" to a crawler, even if they are active participants in the gossip protocol. A node is considered "Reachable" in our data only if it successfully completes a full Levin handshake. All graphs below show only nodes that are reachable according to this definition.

Identifying Spy Nodes

When a Monero node starts, it generates a random peer ID that identifies it on the network. Legitimately, this ID should be consistent, meaning a node that tells you its peer ID during the handshake should return the same ID when you ping it.

According to the Monero Research Lab, if these two values differ it's a strong signal of anomalous behavior. The most likely explanation is a proxy or surveillance node that accepts your connection, performs a handshake (possibly replaying a peer ID it learned elsewhere), but routes your ping to a different backend or generates a fresh ID on the fly, likely for network-wide transaction monitoring. This pattern is associated with network monitoring infrastructure that wants to appear as many distinct nodes while running from fewer actual hosts.

The methodology and additional rationale are explained in Boog900/p2p-proxy-checker.
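Expressed as code, the check is a simple comparison of the two observed IDs. The function name and the label strings below are our own choices for illustration; a missing value models the case where one of the two exchanges did not complete.

```python
from typing import Optional


def classify_node(handshake_peer_id: Optional[int], ping_peer_id: Optional[int]) -> str:
    """Label a node by comparing the peer ID from the handshake with the one from the ping."""
    if handshake_peer_id is None or ping_peer_id is None:
        return "unknown"  # one of the two observations is missing
    if handshake_peer_id == ping_peer_id:
        return "regular"  # consistent identity
    return "spy"          # IDs differ: the anomaly described above


print(classify_node(42, 42))    # regular
print(classify_node(42, 7))     # spy
print(classify_node(42, None))  # unknown
```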

Ban Lists

To avoid connecting to such spy nodes, Monero’s research community publishes a list of IP addresses and IP ranges that were previously identified as exhibiting spy node behaviour. The idea is that nodes load this ban list and either disconnect from, or never connect to, nodes that appear in the list or fall within any of its IP ranges.
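Matching an address against such a list is straightforward with Python's standard `ipaddress` module. The sketch below assumes a plain-text format with one IP or CIDR range per line and `#` comments, which may differ from the published list; the addresses are documentation ranges, not real entries.

```python
import ipaddress


def parse_ban_list(lines):
    """Turn text lines into network objects; single IPs become /32 or /128 networks."""
    networks = []
    for line in lines:
        entry = line.strip()
        if not entry or entry.startswith("#"):
            continue  # skip blanks and comments (assumed file format)
        networks.append(ipaddress.ip_network(entry, strict=False))
    return networks


def is_banned(ip, networks):
    """True if `ip` equals a listed address or falls inside a listed range."""
    addr = ipaddress.ip_address(ip)
    return any(addr in net for net in networks)


ban_list = parse_ban_list(["# example entries", "192.0.2.0/24", "198.51.100.7", ""])
print(is_banned("192.0.2.55", ban_list))
print(is_banned("198.51.100.8", ban_list))
```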

We detect ban list adoption indirectly: if a node returns zero peers from the ban list during its handshake, it either enforces the ban list or simply has never encountered those nodes. We acknowledge that this is imperfect because a node shares at most 250 peers in a handshake while maintaining a bigger list locally. This means we may get lucky and receive only non-banned nodes by pure chance. We’ll go with it anyway as a first-order approximation.
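How likely is the "lucky" case? A rough estimate follows from a hypergeometric argument, assuming the 250 shared peers are a uniform random sample without replacement from the node's local list. That sampling model and the local list size of 1,000 used below are illustrative assumptions, not measured values.

```python
from math import comb


def chance_of_zero_banned(known_peers: int, banned_known: int, sample_size: int = 250) -> float:
    """Probability that a uniform random sample of the peer list contains no banned peer."""
    sample = min(sample_size, known_peers)
    clean = known_peers - banned_known
    if sample > clean:
        return 0.0  # not enough clean peers to fill the sample
    return comb(clean, sample) / comb(known_peers, sample)


for banned in (1, 5, 10, 50):
    p = chance_of_zero_banned(known_peers=1000, banned_known=banned)
    print(f"{banned:>3} banned peers known -> P(no banned peer shared) = {p:.4f}")
```

Under these assumptions the false-positive chance drops quickly as a node knows more banned peers, which is why a single handshake is only a first-order signal and repeated crawls would tighten it.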

Network Snapshot

Note on Scope: The following graphs show a snapshot of the current state of the network and are not a longitudinal study. The interesting work comes from running this continuously and watching the metrics change over time. All data below is from a crawl conducted on 2026-02-24 starting at 10am UTC, completing in 9 minutes.

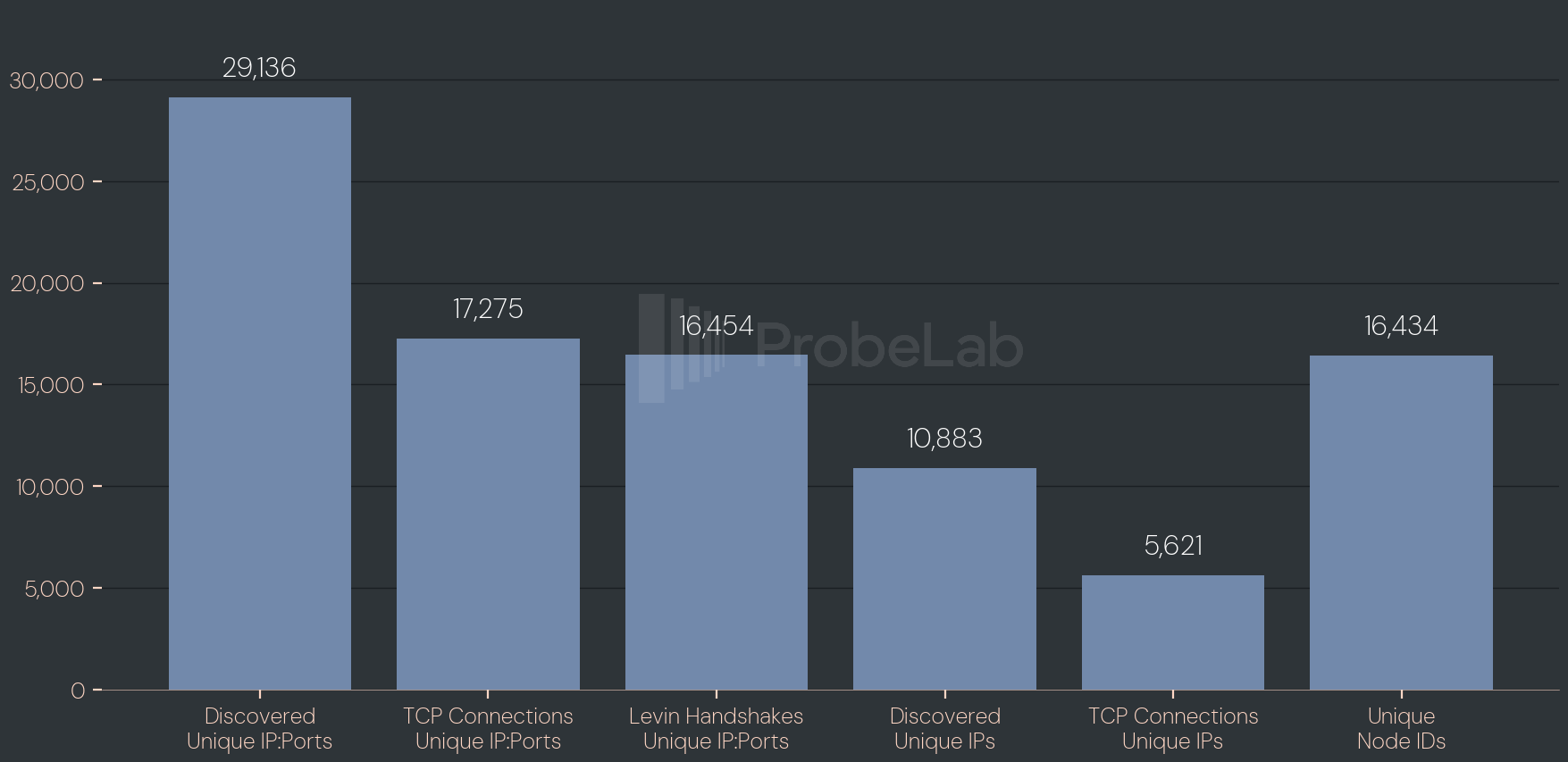

Network Size

The figure above presents key metrics from a single network crawl. We discovered over 29,000 unique IP:Port pairs, of which we successfully established TCP connections to roughly 60%. The remaining 40% either actively refused our connection attempts or simply never responded before timing out (see next graph).

Out of the nodes that accepted our TCP connection, we completed a Levin handshake with around 95% of them (16,454). This is a strong success rate suggesting most reachable nodes are well-behaved and protocol-compliant. The following bar shows the number of unique IP addresses, which is substantially lower than the total IP:Port count. This discrepancy points to one of two things: either multiple nodes are co-located behind the same IP address, or the network experiences enough churn that nodes restart, receive a fresh port from the OS, announce it to their peers, and leave stale records behind before the network has a chance to prune them.

The ratio of successfully connected to total discovered unique IPs sits at around 51%. The number of unique node IDs closely mirrors the number of completed handshakes. Yet it comes in slightly lower. This may hint at distinct IP:Port pairs occasionally advertising the same node ID, suggesting either deliberate multi-homing or nodes cycling through addresses while retaining their identity.

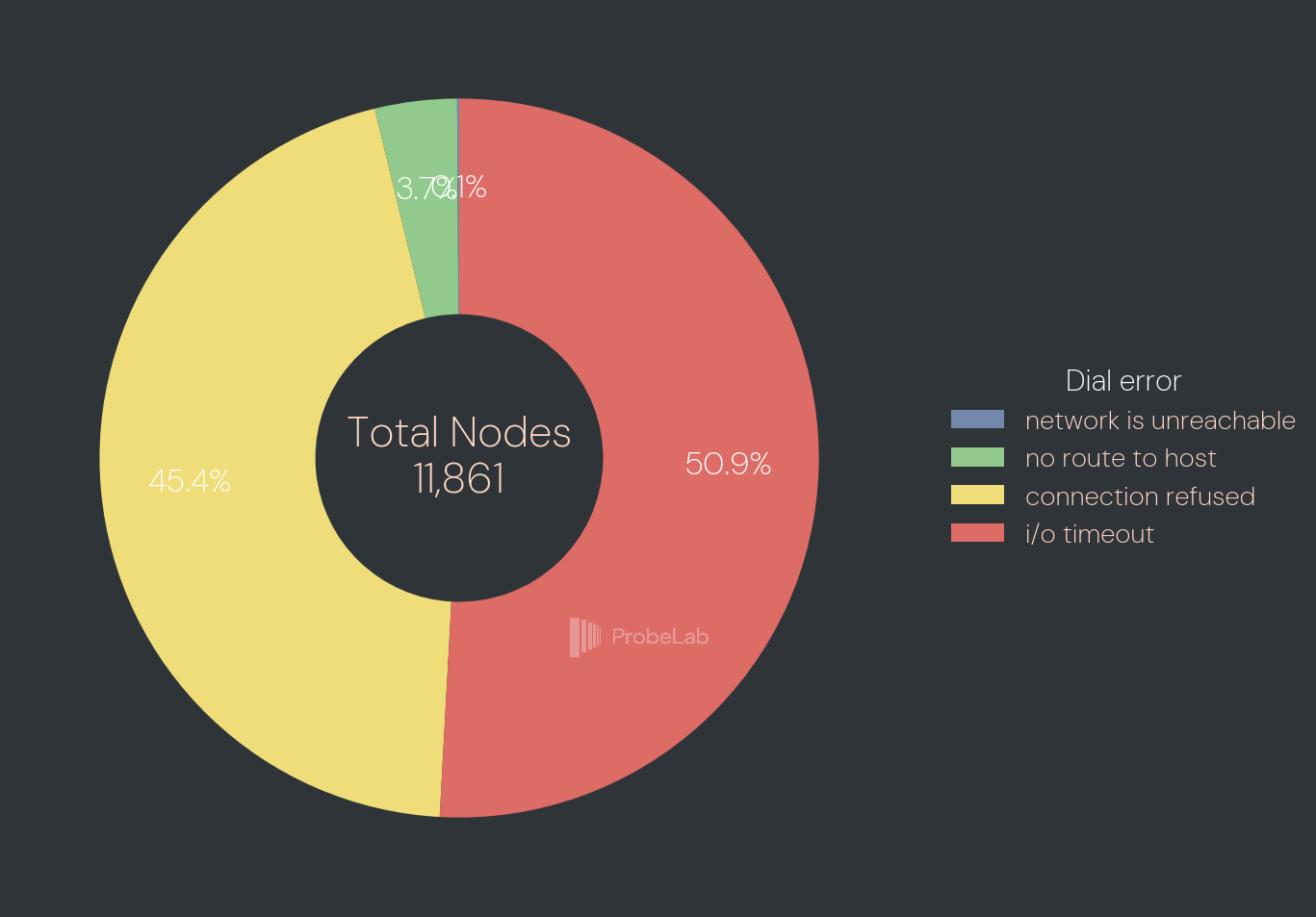

Connection Errors

To better understand that unreachable 40%, the figure above breaks down the connection failures across 11,861 nodes by error type. The split between the two dominant failure modes is remarkably even: just over half (50.9%) of failed connections resulted in a timeout, meaning the node was either behind a firewall, had gone offline, or was simply too slow to respond within our deadline of 30s. Another 45.4% returned an explicit connection refused error, which is a signal that the process is no longer listening on that port, reinforcing the churn hypothesis raised earlier. A small fraction of nodes were outright unreachable at the routing level, with 3.7% returning no route to host and a negligible 0.1% triggering a network is unreachable error. Together, these routing-level failures likely reflect stale records pointing to IPs that have since been reassigned or taken offline entirely.
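For readers who want to reproduce this kind of breakdown, the four buckets map onto standard socket errors. The following Python sketch is an analogue, not Nebula's Go code; the function names and bucket labels are ours, and only the 30-second deadline comes from the crawl configuration described above.

```python
import errno
import socket


def classify_dial_error(exc: BaseException) -> str:
    """Map a dial exception onto the error buckets used in the chart."""
    if isinstance(exc, (TimeoutError, socket.timeout)):
        return "timeout"
    if isinstance(exc, ConnectionRefusedError):
        return "connection refused"
    if isinstance(exc, OSError):
        if exc.errno == errno.EHOSTUNREACH:
            return "no route to host"
        if exc.errno == errno.ENETUNREACH:
            return "network is unreachable"
    return "other"


def dial(ip: str, port: int, timeout: float = 30.0) -> str:
    """Try a TCP connection and return 'ok' or the error bucket."""
    try:
        with socket.create_connection((ip, port), timeout=timeout):
            return "ok"
    except OSError as exc:
        return classify_dial_error(exc)


print(classify_dial_error(ConnectionRefusedError()))
print(classify_dial_error(OSError(errno.EHOSTUNREACH, "No route to host")))
```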

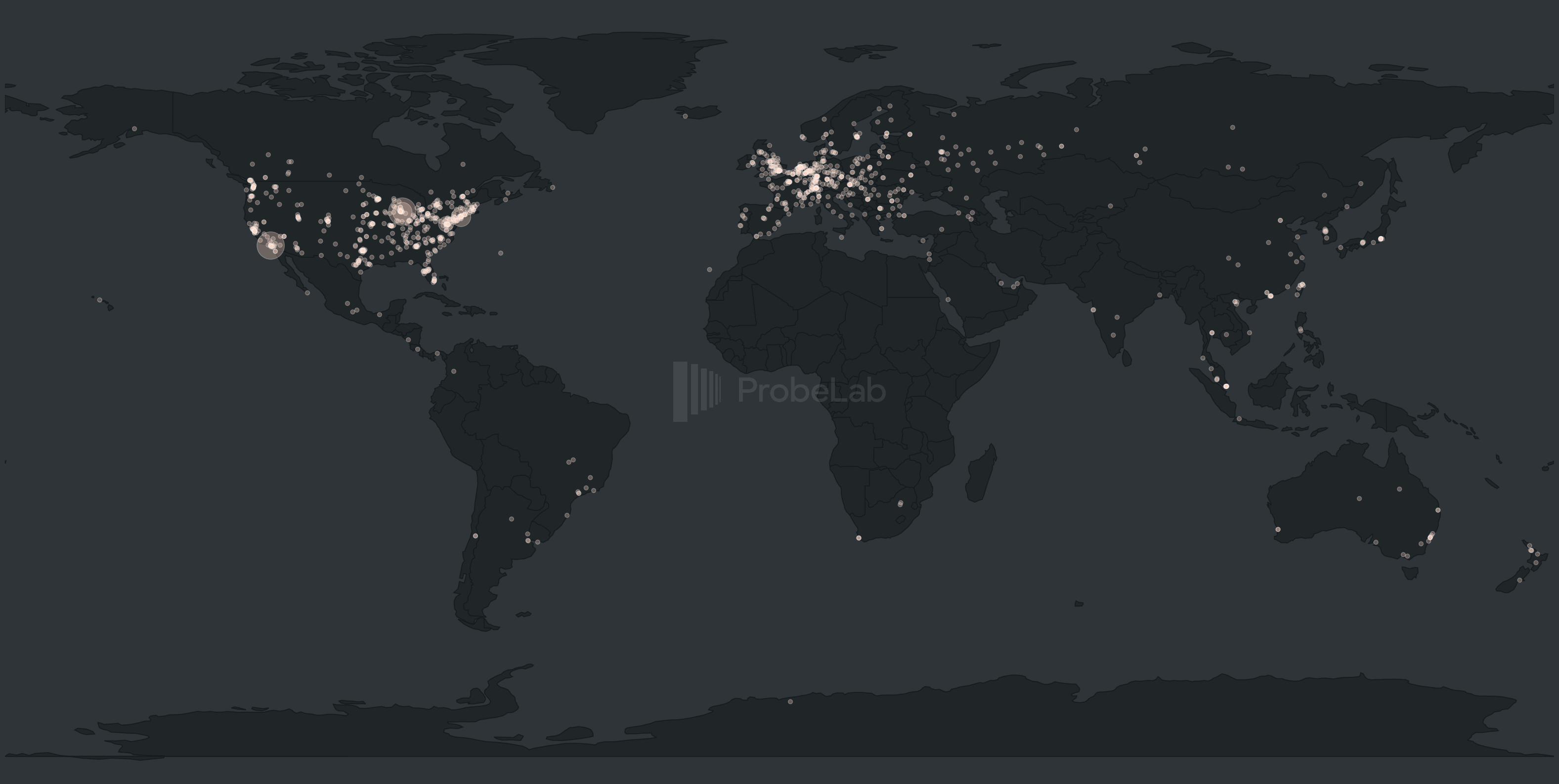

Geographical Distribution

The map above illustrates the geographic distribution of all nodes we successfully handshaked with. At a glance, the Monero network mirrors the footprint of other major decentralized protocols, with heavy concentrations in North America and Europe. While the map largely aligns with the data from monero.fail, a closer look at specific discrepancies highlights the challenges of active network crawling and the inherent "fuzziness" of IP geolocation.

When comparing datasets, we noticed that monero.fail reports a node in the Azores (IP: 82.154.132.50) that is absent from our map. Investigating our raw data, we found this node in our "failed" list where Nebula consistently hit a "no route to host" error when attempting to dial it. The fact that other tools can "see" a node that our crawler cannot reach underscores how network topology and routing can vary based on the observer's location. This variability could be countered with multi-vantage point crawling as a future enhancement for our deployment.

Another interesting outlier is IP 185.146.232.4. While monero.fail places this node in St. Denis (Réunion), our data (sourced from ipregistry.co) mapped it to Romania. To dig deeper, we cross-referenced this across several major providers: IP2Location, IPInfo, and ipgeolocation.io all agreed on Romania, while MaxMind mapped it to Reykjavik, Iceland. Geolocation is not a hard science but instead a heuristic exercise based on provider databases that can be outdated or conflicting.

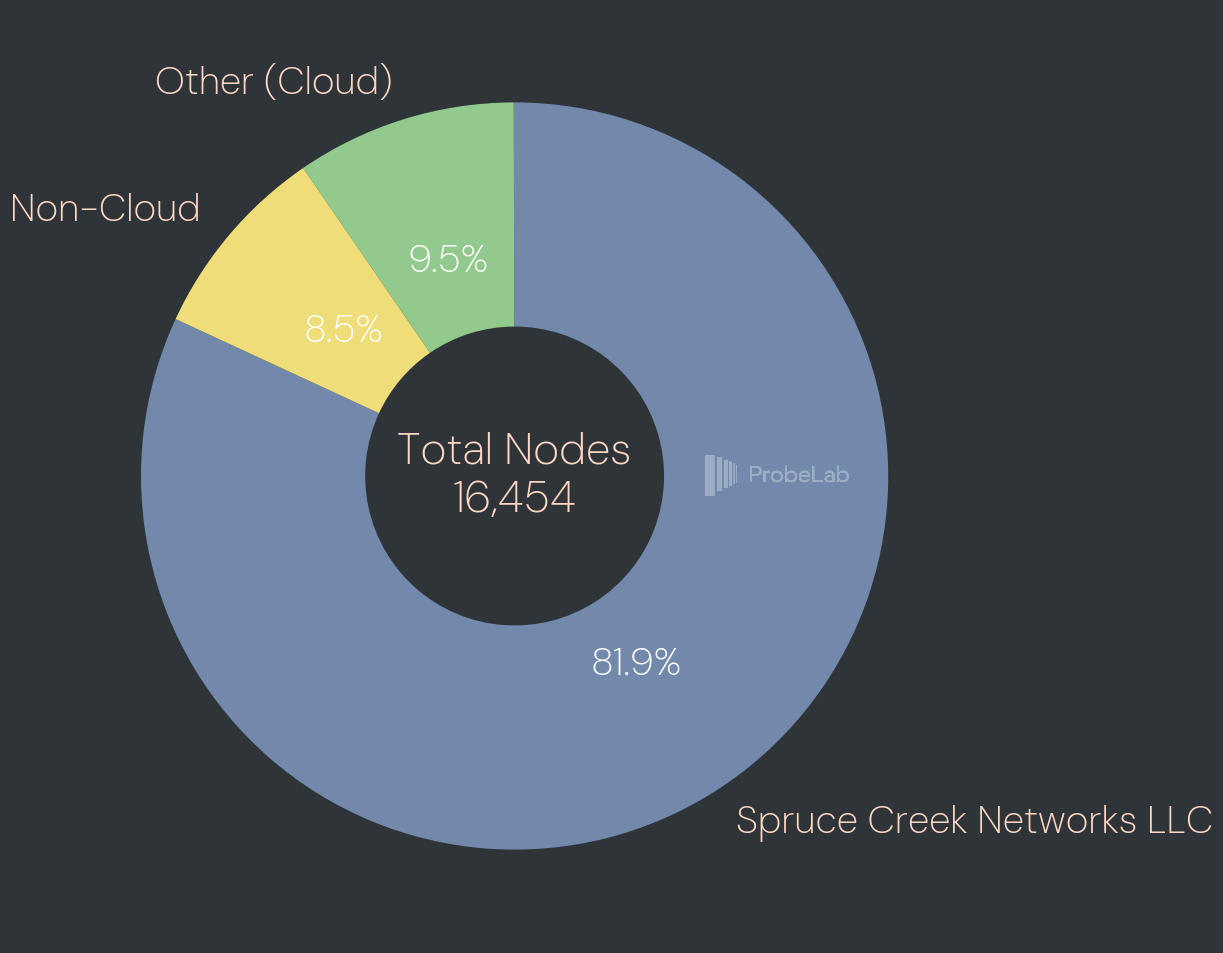

Cloud Provider Distribution

The concentration of the Monero network within specific hosting infrastructures reveals a significant point of centralization. As shown in the pie chart above, over 80% of reachable nodes are running within a single cloud provider: Spruce Creek Networks LLC. The remaining ~3,000 nodes operating outside of this provider (2,972, going by the provider table) still represent a robust peer count compared to many other decentralized networks. Even so, such a heavy reliance on one entity is a clear point of concern for the network's resilience and diversity.

The following table breaks down the top 10 providers by node count and percentage share. Beyond the dominant lead of Spruce Creek, the network becomes much more fragmented. Hetzner holds the second-largest share at approximately 2.1%, followed by DigitalOcean and OVH, with all other providers falling below the 0.75% mark.

| Cloud Provider | Node Count | Share (%) |
|---|---|---|
| Spruce Creek Networks LLC | 13,482 | 81.94% |
| Hetzner Online GmbH | 342 | 2.08% |
| DigitalOcean, LLC | 275 | 1.67% |
| OVH SAS | 123 | 0.75% |
| Comcast Cable Communications, LLC | 96 | 0.58% |
| AT&T Enterprises, LLC | 70 | 0.43% |
| Google LLC | 59 | 0.36% |
| Contabo GmbH | 55 | 0.33% |
| Charter Communications INC | 47 | 0.29% |
| Verizon Business | 41 | 0.25% |
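The shares in the table can be reproduced from the node counts. The short check below assumes the denominator is the 16,454 nodes that completed a handshake; that is our inference from the numbers rather than something the table states explicitly.

```python
HANDSHAKED_NODES = 16_454  # assumed denominator for the Share column

# (node count, share in % as listed in the table)
PROVIDERS = {
    "Spruce Creek Networks LLC": (13_482, 81.94),
    "Hetzner Online GmbH": (342, 2.08),
    "DigitalOcean, LLC": (275, 1.67),
    "OVH SAS": (123, 0.75),
    "Comcast Cable Communications, LLC": (96, 0.58),
    "AT&T Enterprises, LLC": (70, 0.43),
    "Google LLC": (59, 0.36),
    "Contabo GmbH": (55, 0.33),
    "Charter Communications INC": (47, 0.29),
    "Verizon Business": (41, 0.25),
}


def share(count: int, total: int = HANDSHAKED_NODES) -> float:
    """Percentage share rounded to two decimals, as in the table."""
    return round(100 * count / total, 2)


for name, (count, listed) in PROVIDERS.items():
    print(f"{name:<36} {count:>6} {share(count):>6.2f}% (listed: {listed}%)")

outside_top_provider = HANDSHAKED_NODES - PROVIDERS["Spruce Creek Networks LLC"][0]
print("nodes outside the largest provider:", outside_top_provider)
```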

This extreme concentration in a single provider is a phenomenon already known to the Monero community. It’s speculated that Spruce Creek was created specifically for surveillance.

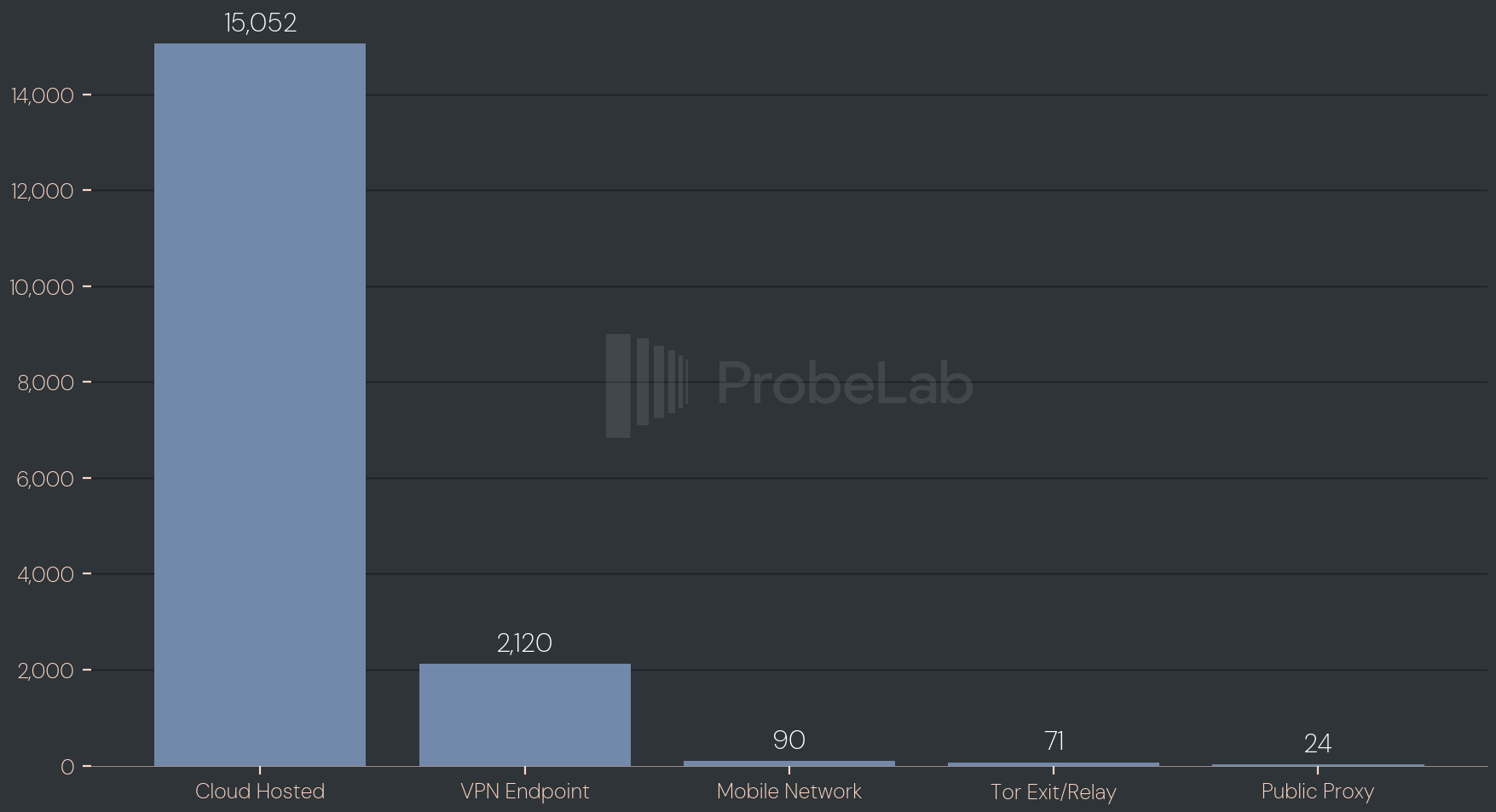

Endpoint Tag Distribution

The above chart categorizes nodes by the type of infrastructure they run on. It shows whether nodes run in a cloud environment, a VPN endpoint, a mobile network, a Tor exit or relay node, or a public proxy such as Apple Private Relay. It's worth noting that these categories are not mutually exclusive, as a single IP address can simultaneously map to a cloud provider and constitute a VPN exit point. The dominance of cloud-hosted nodes here takes on a different weight when considered alongside the Spruce Creek finding: the vast majority of that cloud infrastructure traces back to a single provider suspected of operating for surveillance purposes. The category that appears most 'normal' is therefore arguably the most concerning. The presence of Tor and VPN nodes, while small, represents the most privacy-aware slice of the network.
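Because the tags overlap, they have to be counted per tag rather than per node. A minimal sketch, using the boolean field names from the GeoIP enrichment record shown in the Appendix (the sample records themselves are made up):

```python
from collections import Counter

# Boolean fields taken from the enrichment record in the Appendix.
TAG_FIELDS = ("isCloud", "isVpn", "isTor", "isProxy", "isRelay", "isMobile")


def count_tags(records):
    """Count every tag a record carries; one record may count toward several tags."""
    counts = Counter()
    for record in records:
        for field in TAG_FIELDS:
            if record.get(field):
                counts[field] += 1
    return counts


sample = [
    {"isCloud": True, "isVpn": True},  # cloud-hosted VPN exit: counted twice
    {"isCloud": True},
    {"isTor": True},
    {},                                # untagged residential or business IP
]
tag_counts = count_tags(sample)
print(dict(tag_counts))
```

Here three tagged records produce four tag counts, which is why the bars in such a chart do not have to sum to the number of nodes.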

Archival Node Distribution

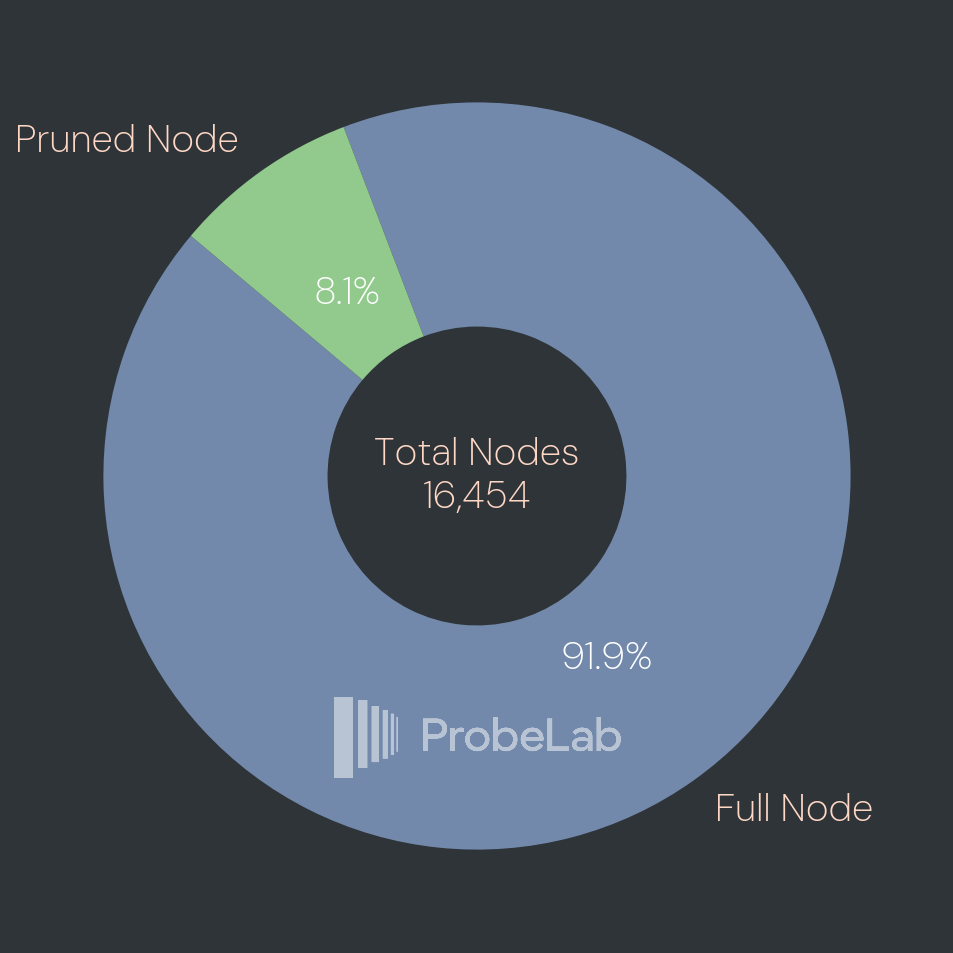

This chart breaks down archival (“full”) vs pruned Monero nodes using the pruning seed that each peer reports during the Levin handshake. In Monero’s protocol, a pruning seed of 0 is the signal for a full node (stores the entire chain), while any non‑zero seed indicates a pruned node (stores only a subset of historical blocks, chosen deterministically from that seed). Aggregating those labels gives the distribution shown above. Out of 16,454 handshaked nodes, 15,125 (91.9%) present as Full Nodes, and 1,329 (8.1%) present as Pruned Nodes. Practically, this means most reachable peers in this crawl claim to be able to serve deep historical data, while a smaller fraction optimizes for disk usage and bandwidth by pruning, which can affect how well the network can satisfy requests for older blocks from random peers.
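The labelling rule and the resulting shares are easy to reproduce. In the sketch below the function name is our own, the non-zero seed value is an arbitrary placeholder, and the counts are the ones reported above.

```python
def node_kind(pruning_seed: int) -> str:
    """A pruning seed of 0 marks a full node; any non-zero seed marks a pruned node."""
    return "full" if pruning_seed == 0 else "pruned"


FULL_NODES = 15_125
PRUNED_NODES = 1_329
TOTAL = FULL_NODES + PRUNED_NODES

full_share = round(100 * FULL_NODES / TOTAL, 1)
pruned_share = round(100 * PRUNED_NODES / TOTAL, 1)

print(node_kind(0), node_kind(123))  # 123 is an arbitrary non-zero placeholder
print(TOTAL, full_share, pruned_share)
```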

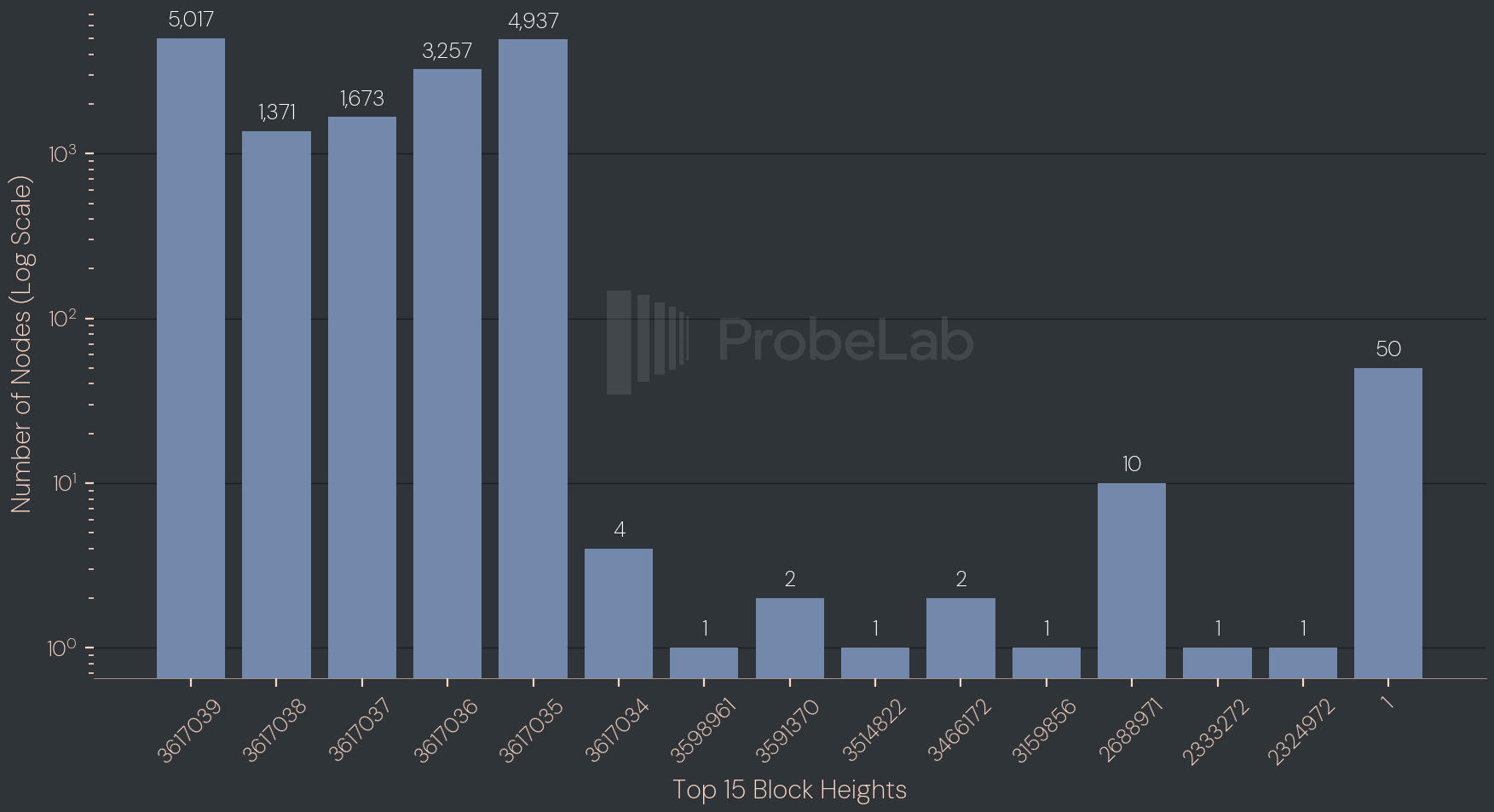

Network Sync Status

The chart shows the distribution of reported block heights across observed nodes, with the number of nodes plotted on a logarithmic scale. Only the top 15 block heights are shown which covers over 99% of all unique reported block heights. Most of the network is concentrated in a tight cluster around the current tip where several adjacent heights each attract thousands of nodes, indicating that the majority are broadly synchronized and only a handful of blocks apart. The fact that the peak is spread across neighboring heights (rather than a single spike) is typical, reflecting normal propagation delays and the reality that nodes are queried at slightly different moments. With a block time of 2 minutes and a crawl duration of ~9 minutes it makes sense to observe four different heights.

Away from the tip, the graph exhibits a clear long tail. A small number of nodes report much older heights, sometimes only a handful at each value. These outliers may constitute nodes that are still syncing, intermittently reachable, stalled, or otherwise operating under constraints.

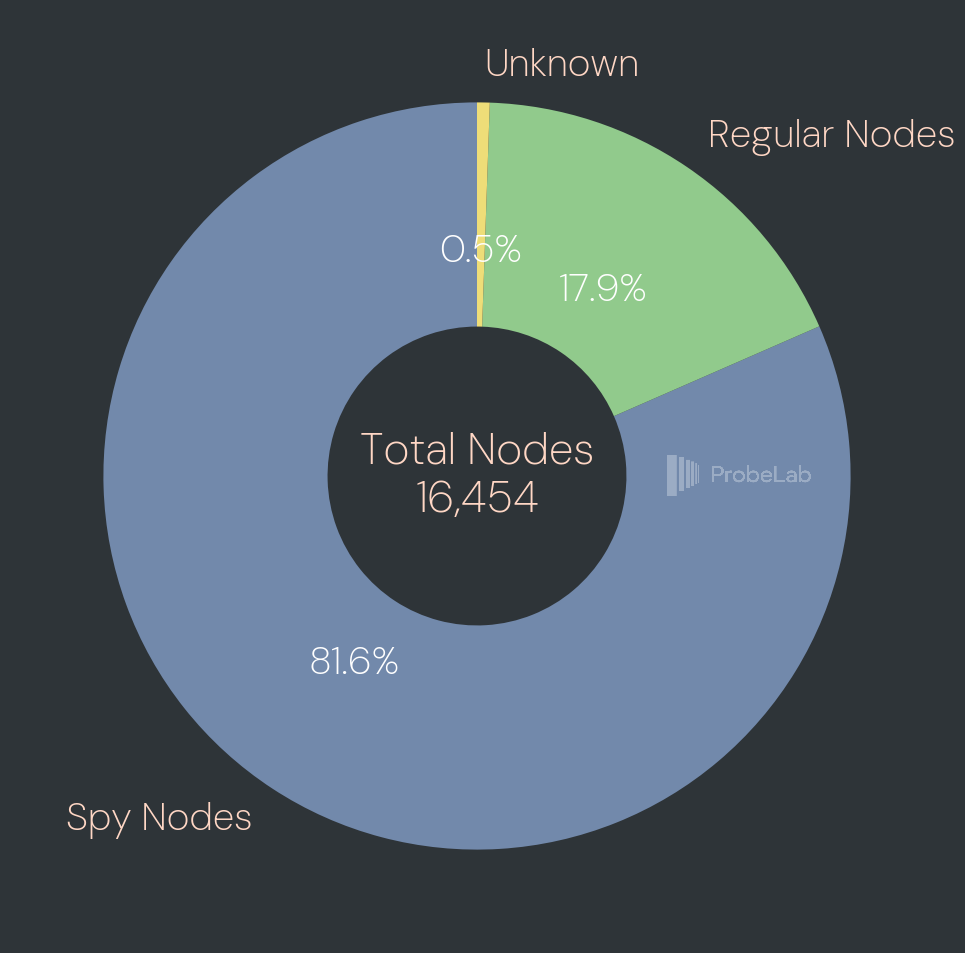

Spy Nodes

This chart shows what the handshake-vs-ping peer ID mismatch looks like at network scale. Out of 16,454 nodes that completed a Levin handshake in this crawl, 13,420 (81.6%) were identified as Spy Nodes based on the inconsistent peer ID signal, while only 2,944 (17.9%) behaved like regular nodes. The remaining 90 (0.5%) were left as unknown, which can happen if the crawler was able to perform a handshake but the connection was closed before we received a ping response.

The most striking part is the size of the suspicious slice and how concentrated it is: every flagged spy node in this dataset resolves to infrastructure running inside the Spruce Creek Networks cloud environment.

We also checked whether we could identify spy nodes that are not included in the ban list. We found a single IP address where the peer IDs didn’t match and which was not included in the ban list: 69.17.52.1 (again, part of Spruce Creek Networks LLC). All other spy nodes were already covered by the ban list. The community recommends running a node while subscribed to a ban list, but how many are actually doing that?

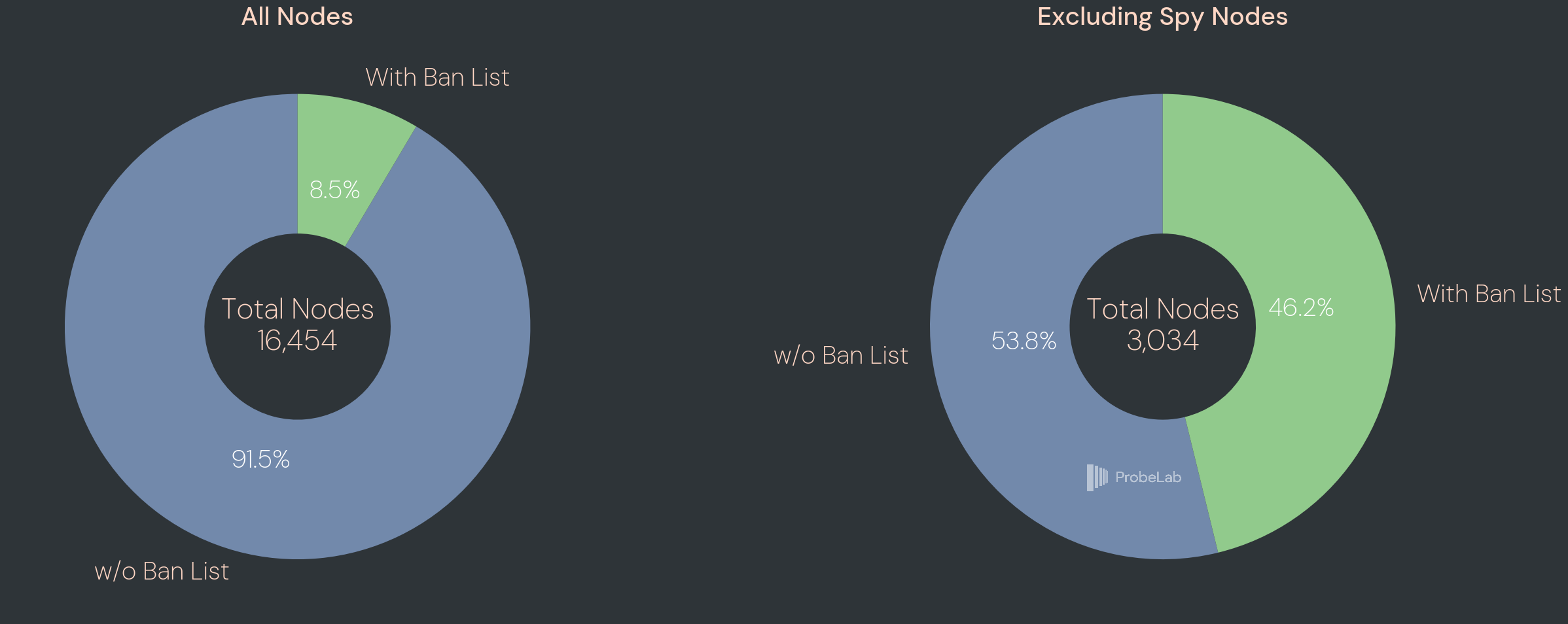

Ban List Adoption

The two charts above show ban list adoption across the network, first across all 16,454 nodes and then with spy nodes excluded. Looking at the full network, the picture appears discouraging: only 8.5% of nodes appear to enforce the ban list, while 91.5% do not. However, this figure is heavily skewed by the sheer volume of spy nodes, which unsurprisingly do not adopt a list designed to block themselves.

When spy nodes are removed from the picture, the numbers shift considerably. Out of the remaining 3,034 legitimate nodes, 46.2% show signs of ban list enforcement, compared to 53.8% that do not. This means that nearly half of the non-adversarial network has taken active steps to isolate known surveillance infrastructure, which is a meaningful level of community response. Still, with just over half of legitimate nodes operating without the ban list, a significant portion of the honest network remains exposed to connections with spy nodes on every crawl.
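The two views are consistent with each other, as a short calculation shows. It assumes that no spy node is counted as enforcing the ban list, and it reconstructs the number of enforcing nodes from the rounded 46.2% figure, so that count is approximate.

```python
TOTAL_NODES = 16_454
SPY_NODES = 13_420

non_spy_nodes = TOTAL_NODES - SPY_NODES        # nodes left after excluding spy nodes
enforcing_nodes = round(0.462 * non_spy_nodes)  # approximate, from a rounded percentage
share_of_all = round(100 * enforcing_nodes / TOTAL_NODES, 1)

print(non_spy_nodes)    # 3034
print(enforcing_nodes)  # 1402
print(share_of_all)     # 8.5
```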

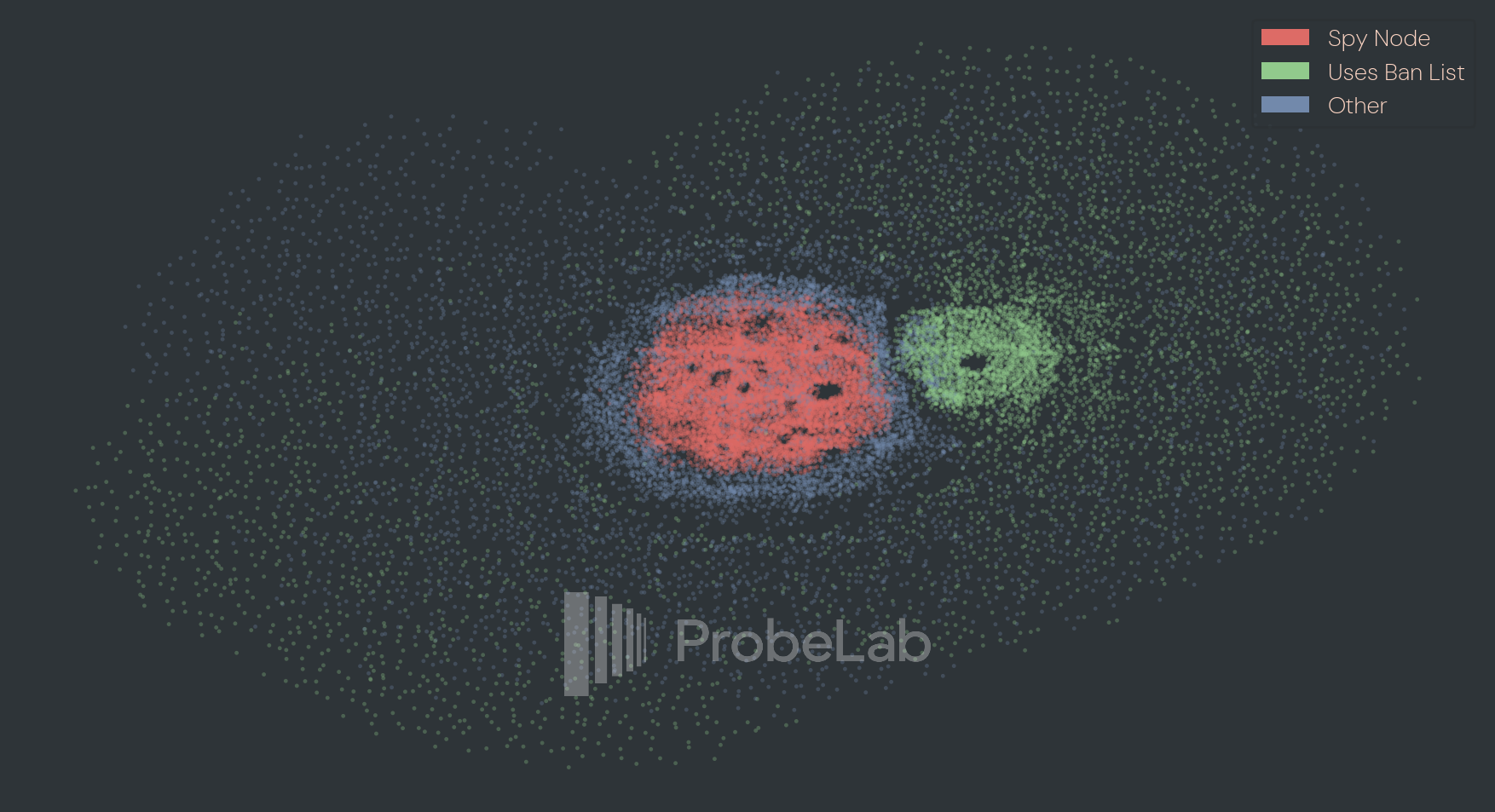

Overlay Topology

Ban list adoption has real implications as it shapes the overlay topology, determining who connects to whom, which in turn affects both block propagation latency and the surveillance effectiveness that the ban list is designed to disrupt.

The figure above visualizes this directly. It shows a force-directed graph of peer connections discovered during the crawl, where each node is a peer and edges connect nodes that were exchanged during handshakes. If peer A told us about peer B, they are linked. Running a force simulation on this graph reveals whether natural clusters emerge from the connection structure alone. Three colors distinguish node types: red for spy nodes, green for nodes enforcing a ban list, and blue for all others.
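The graph construction behind such a figure can be sketched in a few lines. This is an illustration under our own naming, not the code that produced the figure: `handshakes` is a hypothetical mapping from each crawled peer to the peers it advertised, and the layout step (the force simulation itself) is left to a visualization library.

```python
from collections import defaultdict


def build_graph(handshakes):
    """Build an undirected adjacency map: A and B are linked if either advertised the other."""
    neighbors = defaultdict(set)
    for peer, advertised in handshakes.items():
        neighbors[peer]  # make sure isolated peers still appear as nodes
        for other in advertised:
            if other == peer:
                continue  # ignore self-references
            neighbors[peer].add(other)
            neighbors[other].add(peer)
    return dict(neighbors)


def node_color(peer, spy_nodes, ban_list_nodes):
    """Color scheme from the figure: red = spy, green = enforces ban list, blue = other."""
    if peer in spy_nodes:
        return "red"
    if peer in ban_list_nodes:
        return "green"
    return "blue"


handshakes = {"A": ["B", "C"], "B": ["A"], "C": []}
graph = build_graph(handshakes)
colors = {p: node_color(p, spy_nodes={"A"}, ban_list_nodes={"C"}) for p in graph}
print(graph)
print(colors)
```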

The result is an overlay topology that is far from uniform. At the center sits a dense red cluster of tightly interconnected spy nodes with strong mutual connections and a surrounding halo of blue nodes that have not adopted the ban list, and are therefore fully visible to and peered with the adversarial infrastructure. Offset from this core is a smaller but cohesive green cluster, where ban list nodes appear to have organically gravitated toward one another, forming a more segregated neighborhood with reduced exposure to the red cluster. The sparser outer peers, loosely tethered to the core, likely represent newer or less-connected nodes that haven't yet accumulated many peers.

The ban list is clearly working for those who use it but the topology also shows the cost of incomplete adoption. An open question for future work is how this structural separation translates into measurable differences in block propagation speed and the practical effectiveness of deanonymization attempts.

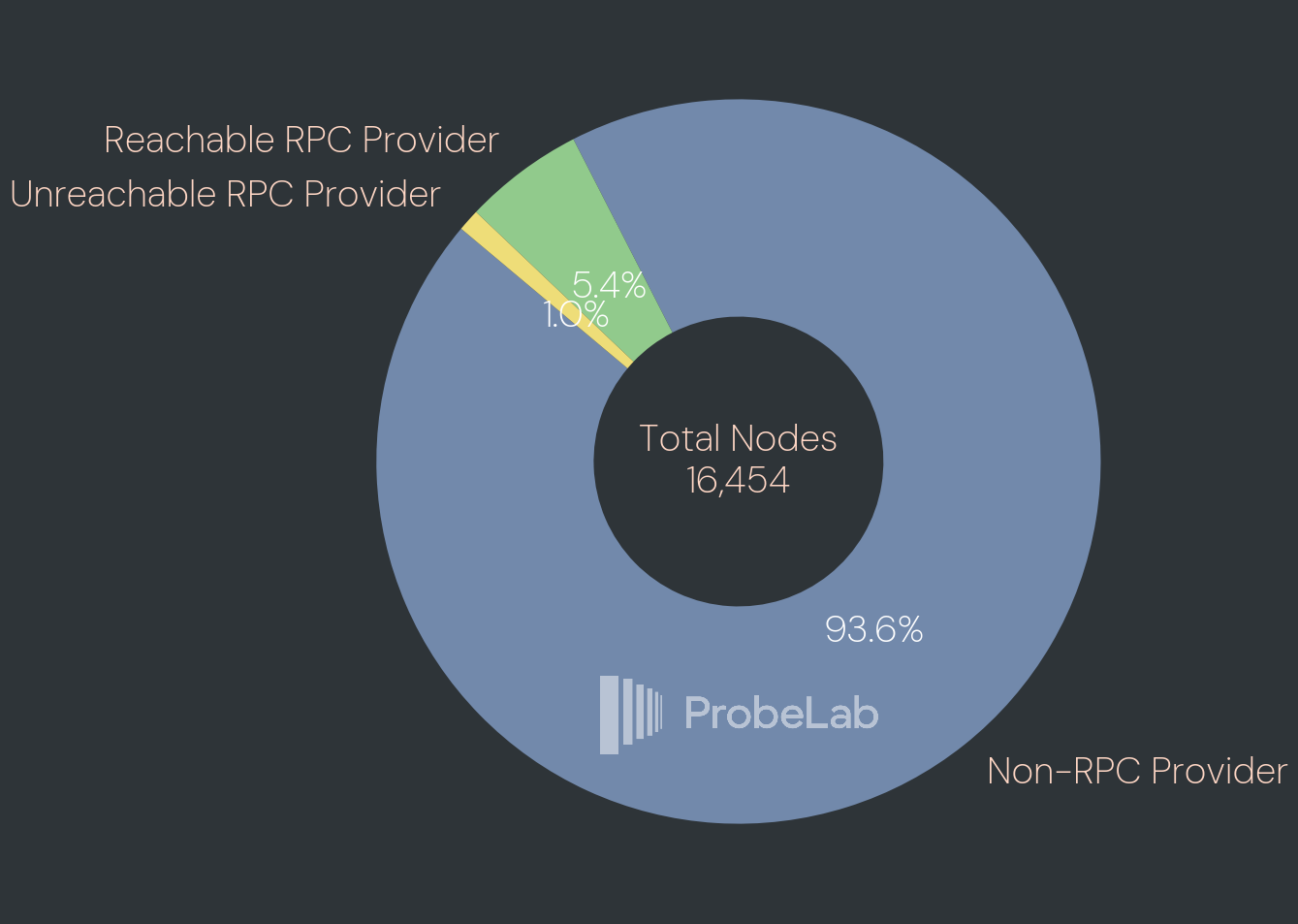

RPC Providers

The above graph shows how many nodes expose an RPC endpoint. We identified 883 nodes (5.4% of the network) that expose their RPC endpoint publicly, while an additional 162 nodes (1%) claim to provide RPC service but did not answer our API queries. Excluding Spy Nodes, the shares of reachable and unreachable RPC providers jump to 30.0% and 5.5% respectively.
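These percentages can be reproduced from the node counts reported earlier. The calculation below suggests that the "excluding Spy Nodes" figures use the 2,944 regular nodes as the denominator and that none of the RPC providers are spy nodes; both points are our inference from the numbers, not something stated explicitly.

```python
ALL_NODES = 16_454
REGULAR_NODES = 2_944       # nodes with matching handshake and ping peer IDs
RPC_REACHABLE = 883
RPC_UNREACHABLE = 162


def pct(part: int, whole: int) -> float:
    """Percentage rounded to one decimal."""
    return round(100 * part / whole, 1)


print(pct(RPC_REACHABLE, ALL_NODES), pct(RPC_UNREACHABLE, ALL_NODES))
print(pct(RPC_REACHABLE, REGULAR_NODES), pct(RPC_UNREACHABLE, REGULAR_NODES))
```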

Looking Ahead

This post is a snapshot. What would make it genuinely useful is continuity and running the crawl periodically with a similar frequency as our other monitored networks (every 2h). This would allow us to observe how the network evolves after upgrades, after adversarial events, or after community interventions like ban list updates.

This snapshot confirms that while Monero’s transaction privacy remains robust, its P2P layer is currently dominated by a singular, suspicious infrastructure provider.

Our next technical objective is to measure how this spy node density impacts Dandelion++ propagation. We have existing tooling for GossipSub propagation metrics from our work on Ethereum, Filecoin, Base, and Optimism. We want to adjust our infrastructure to quantify how the surveillance layer impacts the anonymity of transaction origins in practice, and to measure propagation latency and resource consumption.

We are planning to engage with the Monero community to determine the best path for long-term, public data transparency for these metrics.

Come Work With Us

If you're interested in working with us on custom analyses or specific data access, reach out via our Contact page.

Appendix

Full Data Point

A full data point for a single node looks like this:

    {
      "peer_id": "1GWU6v...", // libp2p PeerID, identity codec encoding IP:Port
      "dial_maddrs": [
        "/ip4/{IP}/tcp/18088"
      ],
      "connect_maddr": "/ip4/{IP}/tcp/18088",
      "connect_duration": "40.056875ms",
      "crawl_duration": "1m47.341159709s",
      "visit_started_at": "2026-02-24T08:52:50.378807+01:00",
      "visit_ended_at": "2026-02-24T08:54:37.720262+01:00",
      "connect_error_str": "",
      "crawl_error_str": "",
      "properties": {
        "discovery_peer": { // data that we learned from another peer
          "ip": "{IP}",
          "port": 18088,
          "addr_type": 1
        },
        "handshake_duration": "3.135166458s",
        "handshake_node": { // data that we got during the handshake with this peer
          "id": {PEER_ID},
          "rpc_port": 18081,
          "current_height": 3616952,
          "top_version": 16,
          "network_id": "EjDxcWEEQWEXMQCCFqGhEA==",
          "support_flags": 1,
          "my_port": 18088,
          "top_id": "2rXADyVvR/dmDdhGocsVOdmPv3/hhKLEdlkKxgxnzzI=",
          "cumulative_difficulty": 591992395292397987
        },
        "ping": { // the ping response
          "peer_id": {PEER_ID},
          "status": "OK"
        },
        "ping_duration": "30.29925ms",
        "retries": 3, // how many times we tried to connect, handshake, ping until all three have succeeded
        "rpc_duration": "4.974665542s",
        "rpc_info": { // the response of the get_info RPC endpoint
          "adjusted_time": 1771919569,
          "alt_blocks_count": 0,
          "block_size_limit": 600000,
          "block_size_median": 300000,
          "block_weight_limit": 600000,
          "block_weight_median": 300000,
          "bootstrap_daemon_address": "",
          "busy_syncing": false,
          "cumulative_difficulty": 591992395292397987,
          "cumulative_difficulty_top64": 0,
          "database_size": 273804165120,
          "difficulty": 693118605363,
          "difficulty_top64": 0,
          "free_space": 18446744073709551615,
          "grey_peerlist_size": 0,
          "height": 3616952,
          "height_without_bootstrap": 0,
          "incoming_connections_count": 0,
          "mainnet": true,
          "nettype": "mainnet",
          "offline": false,
          "outgoing_connections_count": 0,
          "rpc_connections_count": 0,
          "stagenet": false,
          "start_time": 0,
          "synchronized": true,
          "target": 120,
          "target_height": 0,
          "testnet": false,
          "top_block_hash": "dab5c00f256f47f7660dd846a1cb1539d98fbf7fe184a2c476590ac60c67cf32",
          "tx_count": 58363913,
          "tx_pool_size": 63,
          "update_available": false,
          "version": "",
          "was_bootstrap_ever_used": false,
          "white_peerlist_size": 0,
          "wide_cumulative_difficulty": "0x8372df26f22d1a3",
          "wide_difficulty": "0xa161169833",
          "status": "OK",
          "untrusted": false
        }
      }
    }

For most of the peers we’re only getting partial information because, e.g., they don’t expose the RPC endpoint or we were able to open a TCP connection but not to perform a handshake. It’s fuzzy. As mentioned, following the collection of the above data we enrich the data points with Geo information from ipregistry.co based on the discovered IP address. The additional fields include:

    {
      "ip": "{IP}",
      "resolvedAt": "2026-02-24T14:53:16.467224476Z",
      "continent": "AS",
      "country": "TH",
      "city": "Phuket",
      "latitude": 7.89062,
      "longitude": 98.39807,
      "isCloud": false,
      "isVpn": false,
      "isTor": false,
      "isProxy": false,
      "isBogon": false,
      "isRelay": false,
      "asn": 23969,
      "company": "TOT Public Company Limited",
      "isMobile": false,
      "type": "business",
      "domain": "tot.co.th"
    }

On Monero's Reputation

Monero occupies a complicated position in public discourse. It's the most technically credible privacy crypto-network/currency, and it's also the one most frequently cited in the context of illicit activity like ransomware payments, darknet markets, or sanctions evasion. That association is not fabricated, as privacy tools attract adversarial use, and Monero is no exception.

Privacy is not inherently criminal. Every privacy-preserving technology faces the same dual-use reality, and the existence of misuse does not invalidate the legitimate need that motivated the technology in the first place. Financial privacy matters to the dissident receiving funds under an authoritarian regime, the patient purchasing medication in a country that criminalizes their condition, and the ordinary individual who simply believes financial privacy is a fundamental right.

Our work sits entirely outside this debate. We do not touch the transaction layer. We do not attempt to trace, deanonymize, or attribute payments. What we measure is the P2P topology: which nodes exist, where they are hosted, and whether they behave consistently with the protocol. This is the same class of infrastructure research that is conducted routinely and without controversy for Bitcoin, Ethereum, and every other major decentralized network.
